Credit: Brad Chacos/IDG

Creative benchmarks

After witnessing how well Ampere performed in creative tasks versus Turing as part of our GeForce RTX 3090 review, we decided to test a few content creation benchmarks on the RTX 3070, too. Full-time creators would probably be better off investing in a more powerful GPU with more memory capacity and drivers optimized around professional applications, but if you want to do some video rendering on the side of your gaming, the RTX 3070 often tramples over the RTX 2080 Ti thanks to Ampere’s improved RT and tensor cores. Using the RT cores for motion blur acceleration isn’t a big deal in gaming (yet, at least), but it can make a world of difference in some creative workloads.

We’ve only recently started testing some creative workloads, so you won’t find the RTX 2070 compared here, nor many other GPUs. But these should show how the RTX 3070 stacks up against previous-gen flagships, including the RTX 2080 Ti and GTX 1080 Ti.

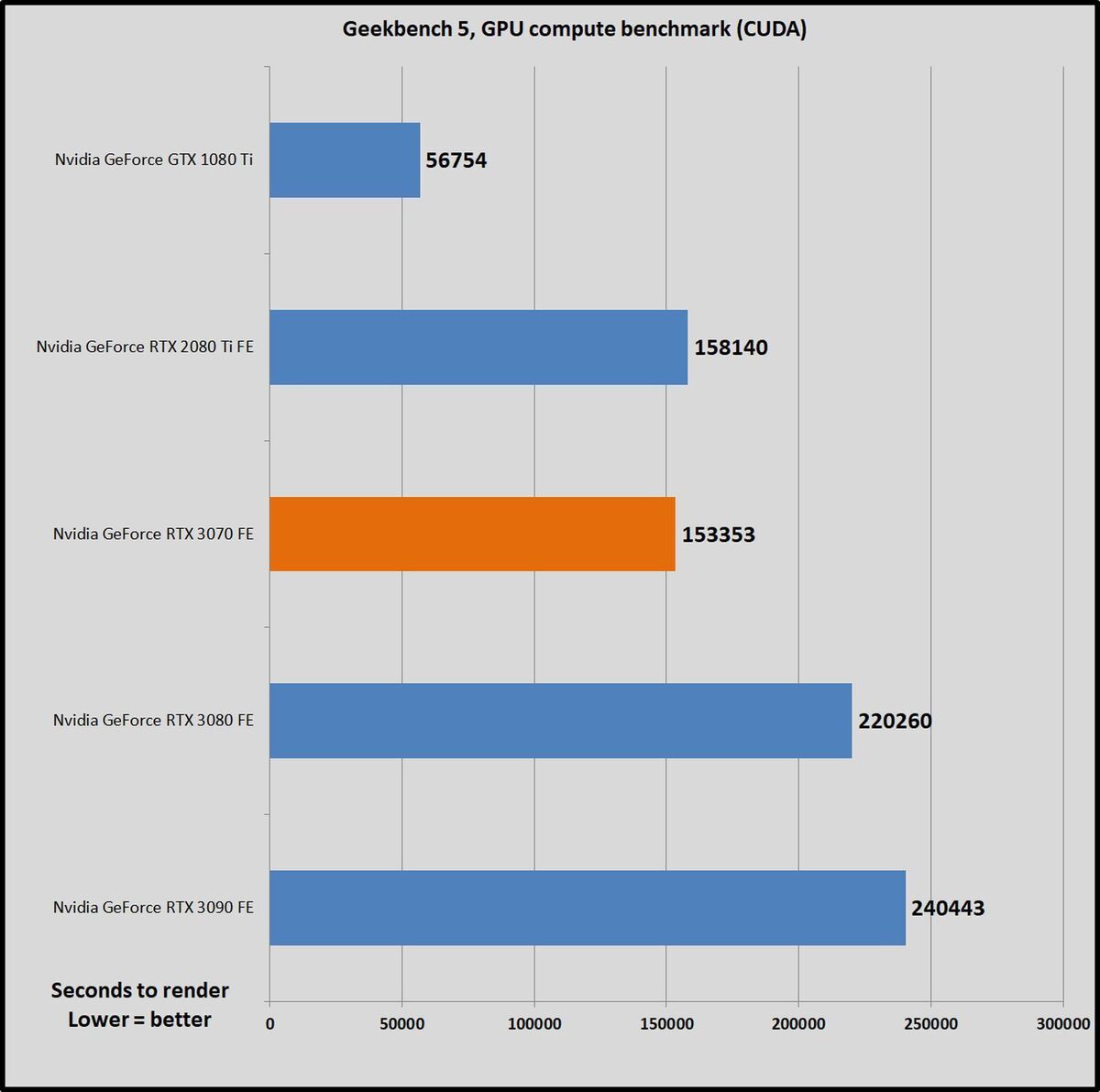

Let’s start with Geekbench 5. This quick cross-platform benchmark measures GPU compute performance “using workloads that include image processing, computational photography, computer vision, and machine learning.” The app supports OpenCL and CUDA. OpenCL runs on GPUs from any vendor, while CUDA requires Nvidia hardware and software but delivers faster performance. We’ve focused on CUDA results here, so you’ll only see Nvidia GPUs compared.

The RTX 3070 lands just behind the RTX 2080 Ti here. Let’s move on to more practical rendering benchmarks.

LuxMark 3.1 is a pure OpenCL benchmark based on the LuxRender v1.5 engine. It offers three different scenes to test. The “simple” Luxball HDR renders 217K triangles; the “medium” Neumann TLM-102 Special Edition renders 1769K triangles; and the “complex” Hotel Lobby renders 4973K triangles. Higher scores are better.

The $700 RTX 3080 stands head and shoulders above the others, but the RTX 3070 pulls ahead of the RTX 2080 Ti by a notable margin.

Nvidia’s software stack is its ace in the hole. Many developers swear by CUDA software optimizations, and now that RTX is here, Nvidia’s been rolling out “OptiX” technology that leverages all those RT and tensor cores for creative purposes. Blender is a very popular free and open-source 3D graphics program used to create visual effects and even full-blown movies. In 2019, Blender integrated Nvidia OptiX into Cycles, its physically based path tracer for production rendering, to tap into GeForce’s RT cores for hardware-accelerated ray tracing.

We tested Blender using the Blender Open Toolkit. We tested two scenes: Classroom and Victor, with the latter being the most strenuous scene the benchmark offers. AMD’s Radeon VII consistently crashed when trying to run Victor, but worked fine with Classroom. We tested each graphics card with the best-performing GPU acceleration possible for that card. That’s OpenCL for the Radeon VII, CUDA for the GTX 1080 Ti, and OptiX for the GeForce RTX cards. The results show total rendering time, so lower is better.
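If you want to compare cards across these tests yourself, keep in mind that the charts don’t all point the same way: Blender reports render time (lower is better), while the other benchmarks report scores (higher is better). Here’s a minimal Python sketch of how you might normalize both into a simple “times faster” figure. The helper name `relative_performance` is hypothetical, and the numbers are made-up placeholders, not measurements from this review.

```python
def relative_performance(baseline, candidate, lower_is_better):
    """Return how many times faster `candidate` is than `baseline`.

    A value above 1.0 means the candidate card is faster.
    """
    if baseline <= 0 or candidate <= 0:
        raise ValueError("benchmark results must be positive")
    if lower_is_better:
        # Render times: a smaller number is the better result.
        return baseline / candidate
    # Scores: a bigger number is the better result.
    return candidate / baseline


# Placeholder values for illustration only; NOT results from this review.
render_seconds = {"card_a": 120.0, "card_b": 90.0}  # lower is better
bench_score = {"card_a": 300.0, "card_b": 360.0}    # higher is better

time_ratio = relative_performance(
    render_seconds["card_a"], render_seconds["card_b"], lower_is_better=True
)
score_ratio = relative_performance(
    bench_score["card_a"], bench_score["card_b"], lower_is_better=False
)

print(f"Render time: card_b is {time_ratio:.2f}x as fast as card_a")
print(f"Score: card_b is {score_ratio:.2f}x as fast as card_a")
```

Expressing everything as a ratio against one baseline card makes a time-based chart and a score-based chart directly comparable, though it says nothing about memory capacity or stability.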

Once again, the RTX 3070 finishes its tasks at a much faster clip than the RTX 2080 Ti. The advanced motion blur acceleration inside Ampere is pulling its weight here. If you render 4K or 8K videos, though, the larger 11GB memory capacity of the RTX 2080 Ti might wind up more useful than the faster render times provided by the 8GB RTX 3070.

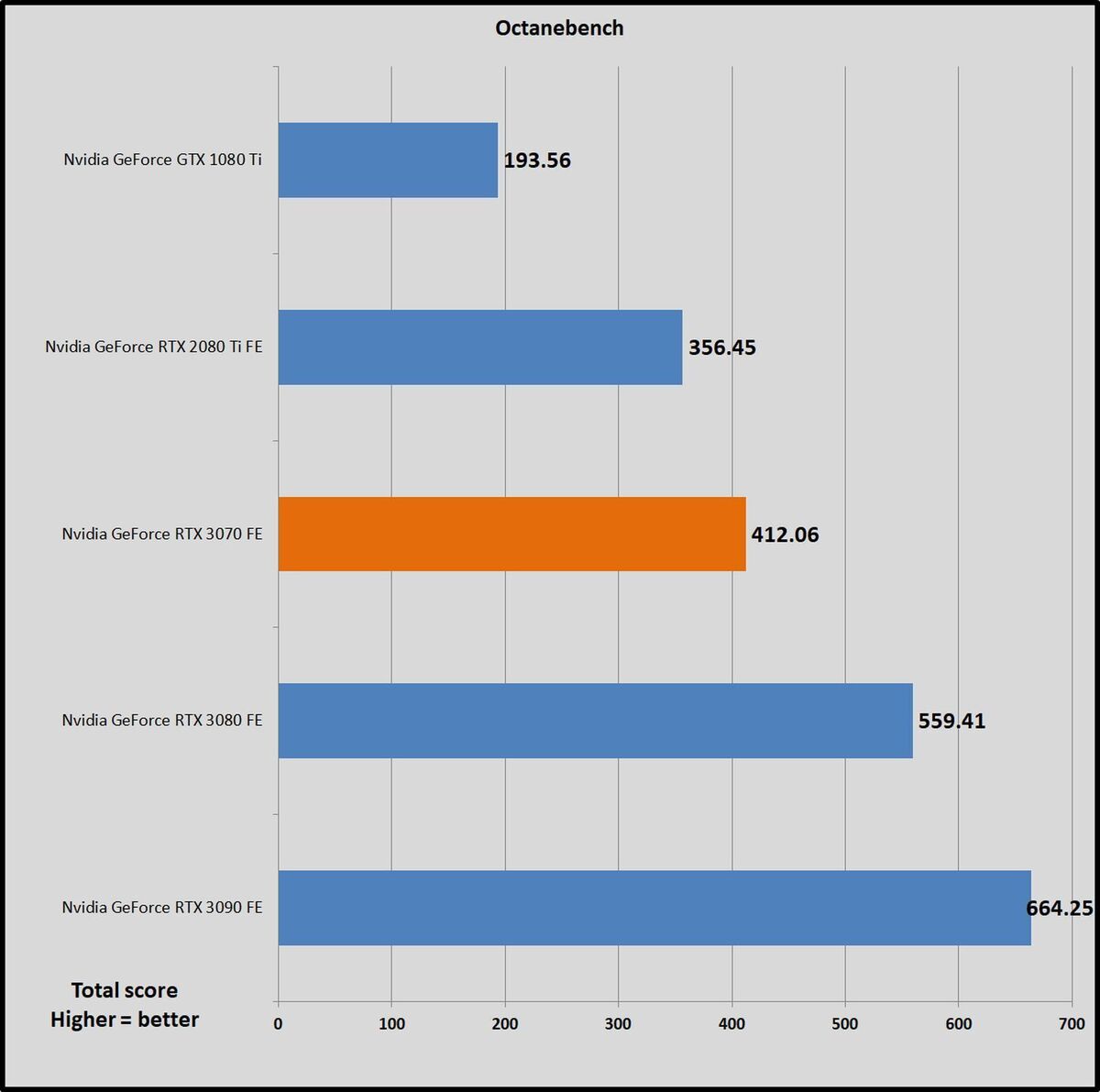

OctaneBench 2020 v1.5 is a canned test offered by OTOY to benchmark your system’s performance with the company’s OctaneRender, an unbiased, spectrally correct GPU engine. OctaneBench (like OctaneRender) also integrates Nvidia’s OptiX API for accelerated ray tracing performance on GPUs that support it. The RTX cards do; the GTX 1080 Ti again sticks to CUDA. The benchmark spits out a final score after rendering several scenes, and the higher it is, the better your GPU performs. OctaneRender requires CUDA or OptiX, so again, you won’t find the Radeon VII here.

The creative conquering is complete. Results went back and forth in games, but if Nvidia wants hard proof that the $500 GeForce RTX 3070 is faster than the $1,200 RTX 2080 Ti, the company can point to its rendering chops.
